Prove AI ROI with Execution Data

Not a survey. Not self-reported adoption. Measured from actual execution patterns. See whether AI tools are improving speed, accuracy, and throughput — with data you can defend to the board.

The AI Measurement Gap

Enterprises are spending billions on AI. Most cannot answer a basic question: is it working?

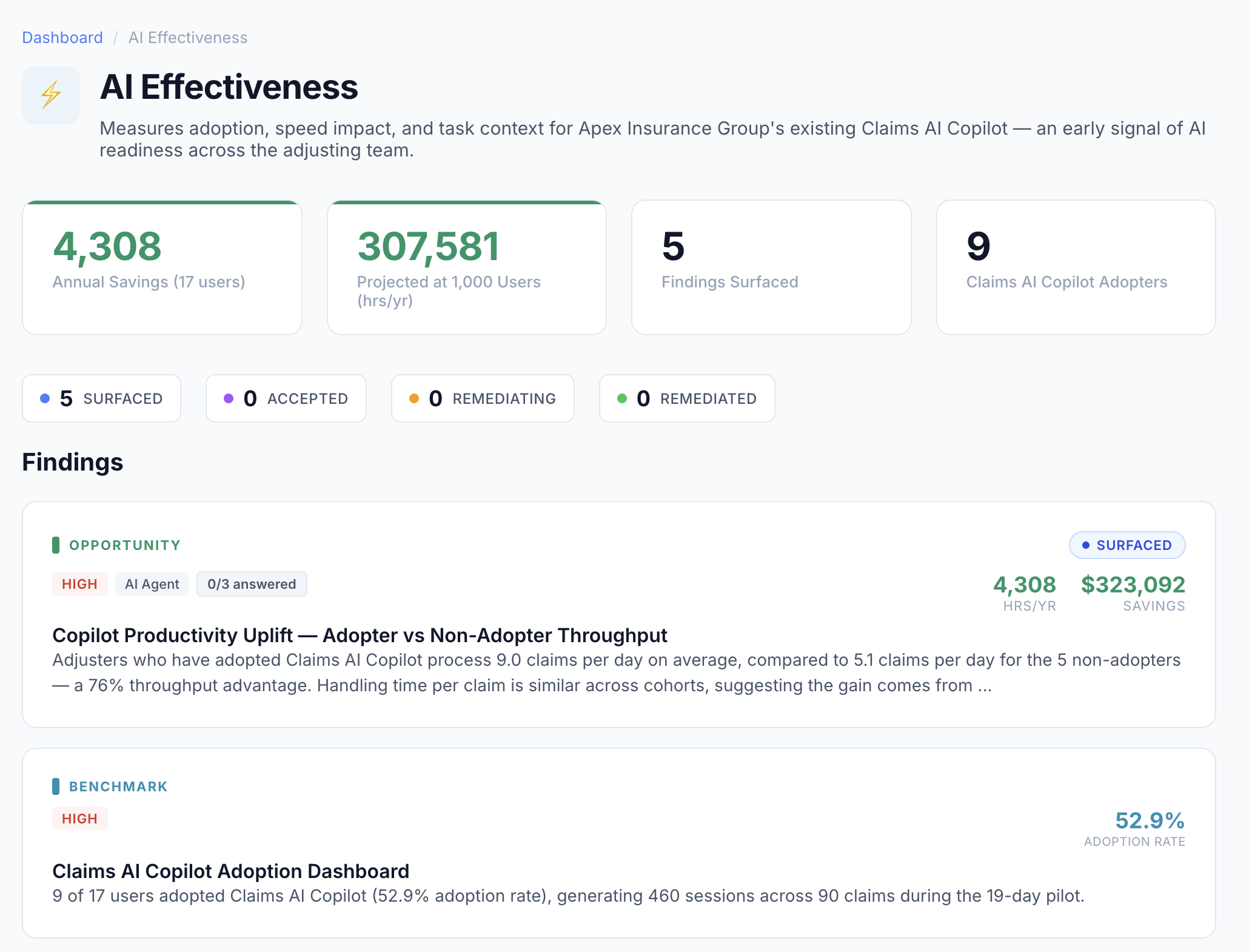

76%: higher throughput measured for AI copilot adopters than for non-adopters in real enterprise deployments

52%: typical adoption rate, meaning nearly half the workforce isn't using the AI tools you've deployed

0: the typical number of enterprises able to prove AI ROI with execution-level data before Pyze

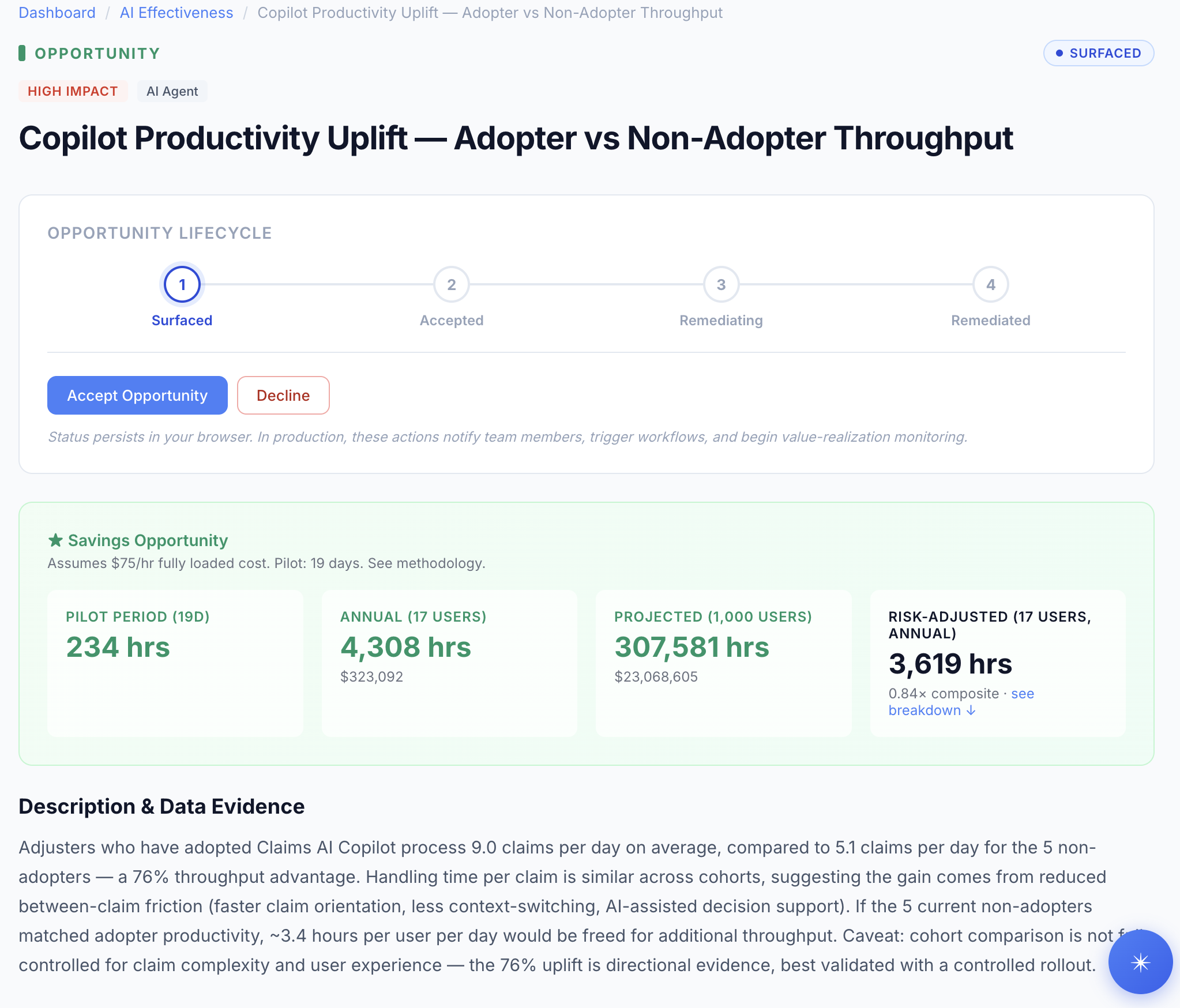

Adopter vs. Non-Adopter Analysis

The AI Effectiveness agent automatically segments your workforce into adopters and non-adopters, then compares real execution metrics between the two cohorts.

What Gets Measured

- Adoption rates: who is actually using AI tools
- Throughput comparison: cases/tasks per day by cohort
- Time-to-completion before vs. after AI
- Differences in accuracy and rework rate
- Workflow behavior changes post-adoption

What You Can Prove

- Quantified productivity lift from AI tools
- Adoption gaps: where investment isn't landing
- ROI projections at scale (from pilot to enterprise)
- Which AI tools work and which don't
- Training priorities to close adoption gaps
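The adopter vs. non-adopter comparison described above can be illustrated with a minimal sketch. It assumes a hypothetical per-worker, per-day activity record (`WorkerDay`, `used_ai_tool`, `tasks_completed` are illustrative names, not Pyze's schema or API), classifies a worker as an adopter if they used an AI tool at least once, and computes adoption rate and relative throughput lift as (adopter mean − non-adopter mean) / non-adopter mean.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class WorkerDay:
    """One worker's activity on one day (hypothetical schema, not Pyze's)."""
    worker_id: str
    used_ai_tool: bool    # assumption: whether the worker invoked an AI tool that day
    tasks_completed: int  # cases/tasks finished that day


def segment(records: list[WorkerDay]) -> tuple[set[str], set[str]]:
    """Split workers into adopters (used AI at least once) and non-adopters."""
    all_workers = {r.worker_id for r in records}
    adopters = {r.worker_id for r in records if r.used_ai_tool}
    return adopters, all_workers - adopters


def cohort_throughput(records: list[WorkerDay], cohort: set[str]) -> float:
    """Mean tasks completed per worker-day within one cohort."""
    return mean(r.tasks_completed for r in records if r.worker_id in cohort)


def compare(records: list[WorkerDay]) -> dict[str, float]:
    """Adoption rate plus throughput of each cohort and the relative lift."""
    adopters, non_adopters = segment(records)
    if not adopters or not non_adopters:
        raise ValueError("need at least one adopter and one non-adopter")
    a = cohort_throughput(records, adopters)
    n = cohort_throughput(records, non_adopters)
    if n == 0:
        raise ValueError("non-adopter baseline throughput is zero")
    return {
        "adoption_rate": len(adopters) / (len(adopters) + len(non_adopters)),
        "adopter_throughput": a,
        "non_adopter_throughput": n,
        "throughput_lift": (a - n) / n,  # 0.5 means 50% higher throughput
    }


if __name__ == "__main__":
    # Toy data for illustration only; not real deployment results.
    records = [
        WorkerDay("alice", True, 11),
        WorkerDay("alice", True, 13),
        WorkerDay("bob", False, 8),
        WorkerDay("carol", False, 8),
    ]
    print(compare(records))
```

With this toy data, one of three workers is an adopter, so the adoption rate is 1/3, and adopters average 12 tasks/day against 8 for non-adopters, a lift of 0.5 (50%). A raw cohort difference like this does not by itself show that the AI tool caused the lift, since adopters may differ from non-adopters in other ways; before/after comparisons for the same workers are one way to strengthen the evidence.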

The AI Effectiveness Agent

A dedicated analytical engine for measuring whether AI investments are delivering returns — with both opportunity findings and benchmark metrics.

From Measurement to Action

AI Effectiveness isn't a one-time report. It's a continuous loop: measure adoption, identify gaps, take action, measure again.

Measure

Capture AI adoption and impact from real execution data

Identify

Surface adoption gaps and underperforming AI tools

Act

Target training, adjust tools, or redeploy agents

Validate

Confirm improvements with before/after execution metrics

Stop Guessing Whether AI Is Working

Deploy Pyze's AI Effectiveness agent and get real measurement from real execution data.